I am using the DBMS_Cloud.create_external_table procedure to load/query a JSON file from my object storage bucket. It is working correctly with one small issue.

My JSON file (which is given to me from another company and cannot adjust) has two ‘sections’ of JSON. Adding a record delimiter for the first section is what I have in my code below, and again, it works. However, I cannot query anything with a where clause as the ‘second section’ of JSON contains the rest of the characters in one record.

This errors out in size, even if I use blob or clob column type. The JSON files I have are huge (up to 100 GB).

My goal was to create two external tables, the first table using the query below with the ‘1st section of JSON’, and another external table with the ‘2nd section of JSON'.

The issue is the ‘truncatecol’ value ‘true’ is not working to truncate the column when too many characters are there. I would assume this is because it is an external table and not loading directly to database.

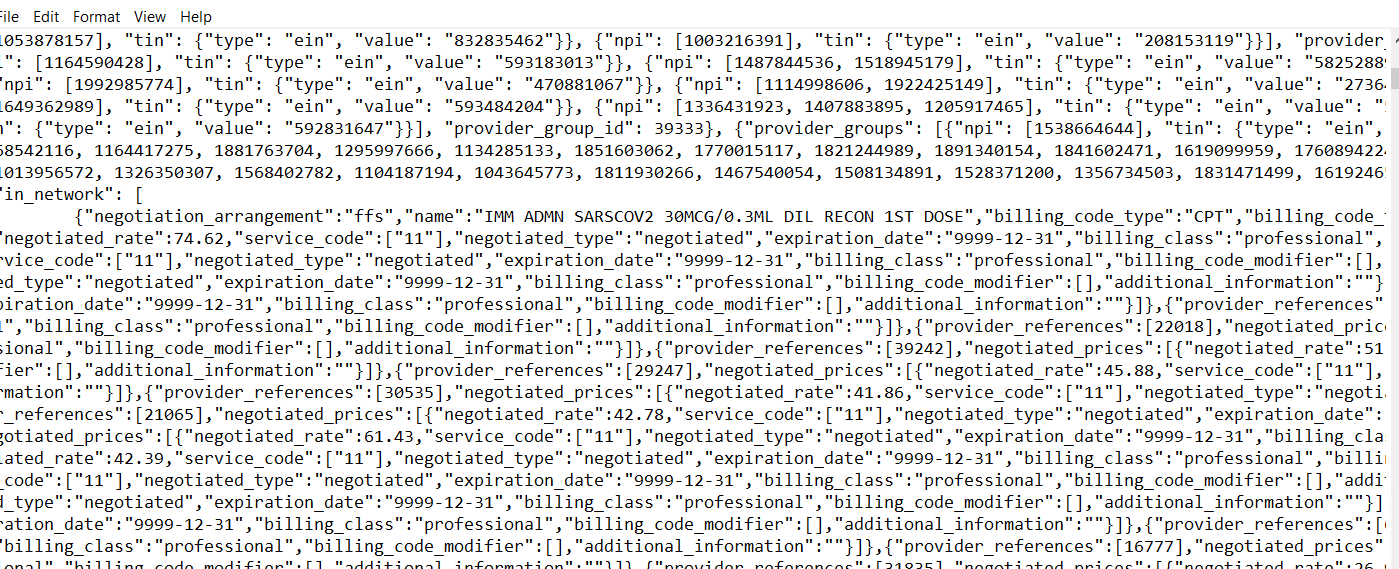

Any ideas how to truncate the column in an external table, or another way to exclude the 2nd section of JSON? For reference, I have a screenshot below where the 1st section ends and 2nd section starts. The 2nd section starts with "in_network":[

I am using Autonomous Data Warehouse 19c.

BEGIN

DBMS_CLOUD.CREATE_EXTERNAL_TABLE(

table_name => 'MY_TABLE',

credential_name => 'CREDENTIAL',

file_uri_list => 'https://objectstorage.XXX/n/XXX/b/XXX/o/UHCJson.json',

format => json_object('recorddelimiter' value '"provider_groups"', 'truncatecol' value 'true', 'rejectlimit' value 'unlimited, 'trimspaces' value 'lrtrim', 'removequotes' value 'true','ignoremissingcolumns' value 'true', 'removequotes' value 'true', 'blankasnull' value 'true','skipheaders' value 0, 'rejectlimit' value 'unlimited'),

column_list => 'NPI varchar(1000)'

);

END;

/